Understanding Ensemble Forecasting and NOAA's New AI Models

How ensemble forecasting works, what NOAA's emerging AI weather models mean for local observers, and how your station data fits into the bigger forecasting picture.

Every weather forecast you have ever seen — whether it was a five-day outlook on television or a probability-of-rain percentage on your phone — started as a set of equations running on a supercomputer. What most people do not realise is that those equations are not run once. They are run dozens of times, with slightly different starting conditions, producing a spread of possible outcomes that forecasters interpret as probabilities. This is ensemble forecasting, and understanding it transforms how you read forecasts, how you evaluate your own station data, and how you appreciate the role your backyard instruments play in the larger numerical weather prediction pipeline.

I have been watching the forecasting world closely for the past two decades, first as a station operator frustrated by inaccurate local forecasts, then as someone who understood enough about the machinery to see why the forecasts were wrong — and how they have gotten dramatically better. The AI revolution in weather modelling that is happening right now, led by NOAA's adoption of machine-learning techniques alongside traditional physics-based models, is the most significant shift since ensemble methods themselves were introduced in the 1990s.

This article explains how ensemble forecasting works, what the major models are, how AI is changing the game, and most importantly, how your personal weather station data fits into this picture. If you contribute to CWOP or other networks (as described in the sharing your data guide and the expanding your reach guide), you are already part of the data assimilation chain that feeds these models.

Quick-Answer Summary

| Concept | What It Means | Why It Matters for Station Operators |
|---|---|---|
| Ensemble forecasting | Running a model many times with perturbed inputs | Gives probabilities, not just single predictions |
| GFS / ECMWF / NAM | Major operational NWP models | Different strengths at different scales and ranges |
| Data assimilation | Ingesting observations into model initial conditions | Your station data contributes to the starting state |
| AI/ML weather models | Neural networks trained on reanalysis data | Faster, sometimes more accurate, but less transparent |
| Ground truth | Comparing model output against actual observations | Your station provides verification data |

What Is Ensemble Forecasting?

Think of it like this. You want to predict where a golf ball will land after you hit it. You could model the swing physics perfectly and get one answer. But tiny uncertainties — a gust of wind, a slight mis-hit, a patch of dew on the clubface — mean the ball could land in a range of spots. If you modelled the shot a hundred times, each with a slightly different set of those tiny unknowns, you would get a scatter of landing points. The densest cluster tells you the most likely outcome; the spread tells you the uncertainty.

Ensemble forecasting does exactly this for the atmosphere. A numerical weather prediction model like the GFS (Global Forecast System) solves the equations of fluid dynamics, thermodynamics, and radiation across a three-dimensional grid covering the entire planet. The initial conditions — the starting state of the atmosphere — are derived from millions of observations: radiosondes, satellites, aircraft, surface stations (including yours, if you contribute to CWOP), and ocean buoys.

But those observations have errors. The atmosphere between observation points is interpolated, not measured. So instead of running the model once from one set of initial conditions, forecasters run it many times — each with slightly perturbed initial conditions that fall within the uncertainty bounds of the observations. The NCEP Global Ensemble Forecast System (GEFS) runs 31 members (one control run plus 30 perturbations). The ECMWF runs 51 members.
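To make the idea concrete, here is a minimal sketch of an ensemble run. It is not how GEFS or the ECMWF ensemble is built: the "model" is the logistic map, a one-line chaotic system standing in for the atmosphere, and the function names, the perturbation size, and the member count are all assumptions chosen for illustration.

```python
import random
import statistics

def toy_model(x0, steps, r=3.9):
    """Iterate the logistic map, a simple chaotic stand-in for a forecast model."""
    x = x0
    trajectory = [x]
    for _ in range(steps):
        x = r * x * (1.0 - x)
        trajectory.append(x)
    return trajectory

def run_ensemble(analysis, n_perturbed=30, obs_error=1e-4, steps=30, seed=42):
    """Run one control member plus n_perturbed members with perturbed starts."""
    rng = random.Random(seed)
    members = [toy_model(analysis, steps)]  # control run, unperturbed
    for _ in range(n_perturbed):
        start = analysis + rng.gauss(0.0, obs_error)
        start = min(max(start, 1e-6), 1.0 - 1e-6)  # keep inside the map's domain
        members.append(toy_model(start, steps))
    return members

def spread_by_step(members):
    """Standard deviation across members at each forecast step."""
    return [statistics.pstdev(values) for values in zip(*members)]

members = run_ensemble(analysis=0.4)
spread = spread_by_step(members)
for step in (0, 5, 10, 20, 30):
    print(f"step {step:2d}: spread = {spread[step]:.5f}")
```

The members start almost identical and drift apart as the steps accumulate, which is the same behaviour a real ensemble shows as lead time increases.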

Spaghetti Plots and Probability Cones

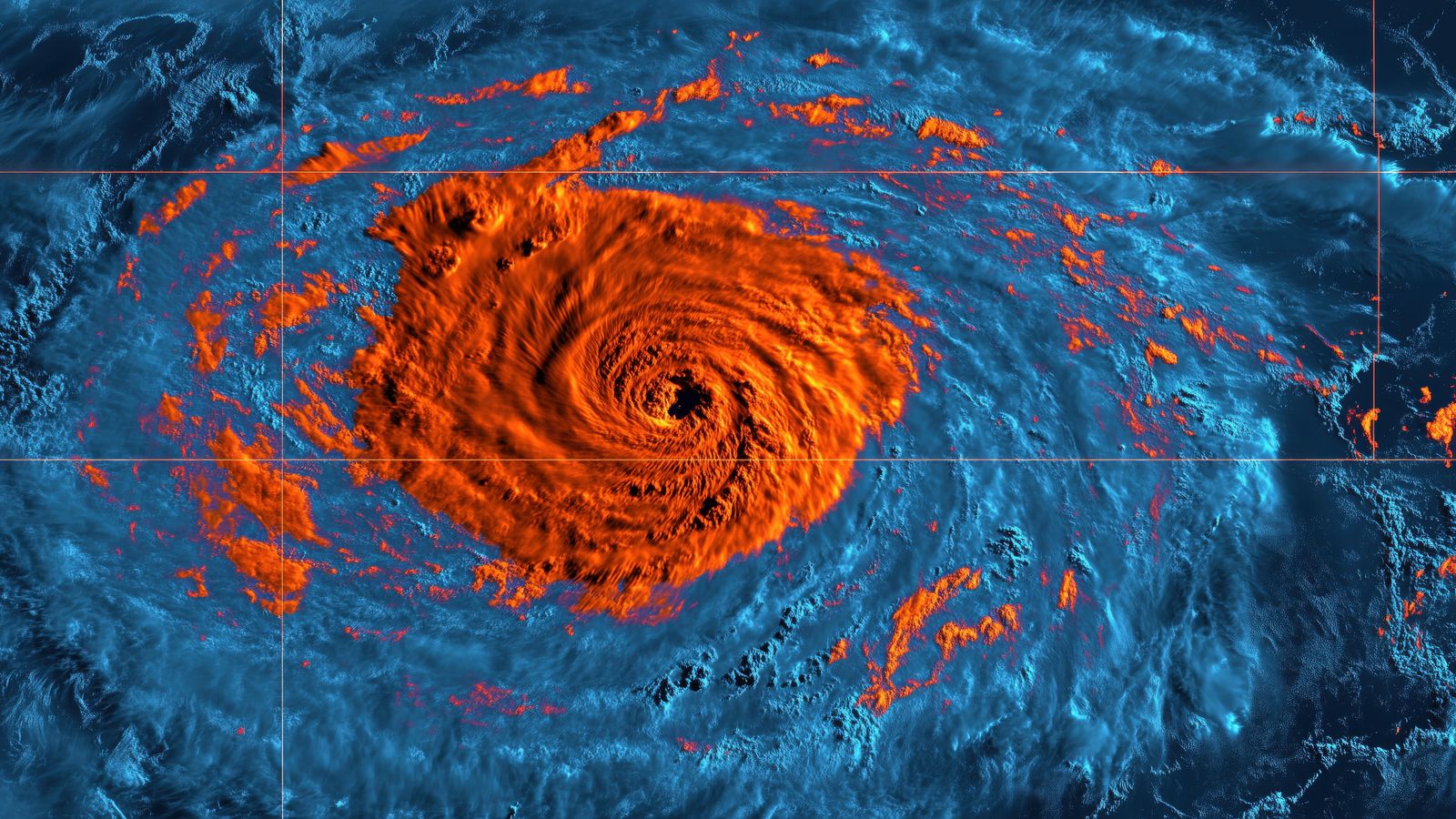

The visual output of an ensemble run is a set of forecasts that diverge over time. Plot the 500 hPa geopotential height from all ensemble members on the same map and you get the famous "spaghetti plot" — a tangle of contour lines that are tightly bundled for the first day or two and spread apart by day five or six.

When the spaghetti strands stay close together, forecasters have high confidence. When they diverge wildly, the atmosphere is in a state where small changes in initial conditions lead to dramatically different outcomes — the classic "butterfly effect" in practice. Tropical cyclone probability cones are derived from exactly this kind of ensemble spread.

Probabilistic vs. Deterministic Forecasts

A deterministic forecast says: "The high temperature tomorrow will be 24 °C." An ensemble-based probabilistic forecast says: "There is a 70% chance the high will fall between 22 °C and 26 °C, with a 15% chance above 26 °C and a 15% chance below 22 °C."

The probabilistic forecast is more useful for decision-making — especially for agriculture, aviation, and emergency management — because it communicates uncertainty explicitly. As a station operator, understanding this distinction helps you interpret forecast verification. When you compare tomorrow's forecast to what your station actually records, a "miss" of 2 °C may actually fall well within the ensemble spread and not constitute a model failure at all.
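A probabilistic statement like the one above can be derived by counting ensemble members. The sketch below assumes a hypothetical 20-member ensemble of forecast highs; the values and the function name are invented for illustration, and operational products usually apply calibration on top of raw member counts.

```python
def bin_probabilities(member_highs, low, high):
    """Fraction of ensemble members below, within, and above [low, high]."""
    n = len(member_highs)
    below = sum(1 for t in member_highs if t < low)
    above = sum(1 for t in member_highs if t > high)
    within = n - below - above
    return {"below": below / n, "within": within / n, "above": above / n}

# Hypothetical forecast highs (°C), one per ensemble member
member_highs = [
    20.8, 21.4, 21.7,                                  # below 22 °C
    22.3, 22.8, 23.1, 23.4, 23.6, 23.9, 24.0,
    24.1, 24.3, 24.4, 24.6, 24.9, 25.2, 25.6,          # 22 to 26 °C
    26.3, 26.9, 27.2,                                  # above 26 °C
]

probs = bin_probabilities(member_highs, low=22.0, high=26.0)
print(probs)

# A station reading 2 °C away from the 24 °C headline value can still be
# inside the range the ensemble considered possible.
observed_high = 26.0
inside_envelope = min(member_highs) <= observed_high <= max(member_highs)
print("observation inside ensemble envelope:", inside_envelope)
```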

The Major Models

GFS (Global Forecast System)

NOAA's flagship global model. Runs four times daily (00Z, 06Z, 12Z, 18Z) with a horizontal resolution of approximately 13 km for the deterministic run and 25 km for the ensemble (GEFS). The GFS is freely available and widely used by weather apps, private forecasters, and research institutions worldwide.

Strengths: global coverage, long forecast range (16 days), freely available data. Weaknesses: coarser resolution than regional models, sometimes struggles with mesoscale phenomena like sea breezes and mountain valley winds.

ECMWF (European Centre for Medium-Range Weather Forecasts)

Generally considered the most skilful global model, particularly for medium-range forecasts (3–10 days). Runs at approximately 9 km resolution (deterministic) and 18 km (ensemble, 51 members). ECMWF data is partially restricted — the high-resolution output requires a subscription — but ensemble products are increasingly available.

Strengths: superior medium-range skill, larger ensemble size, advanced data assimilation. Weaknesses: less freely available than GFS, slightly slower to incorporate some observation types.

NAM (North American Mesoscale Model)

A regional model focused on North America, running at approximately 3 km resolution in its nested domains. The NAM excels at short-range forecasts (12–36 hours) for mesoscale phenomena — thunderstorm initiation, frontal passages, lake-effect snow.

Strengths: high resolution, good convective-scale forecasting. Weaknesses: limited geographic domain, short forecast range.

HRRR (High-Resolution Rapid Refresh)

The HRRR runs hourly at 3 km resolution over the contiguous United States. It is the model to watch for the next 6–18 hours, particularly for convective weather. The HRRR assimilates radar data and surface observations — including personal weather station data from MADIS — making it the model most directly influenced by your CWOP contributions.

How Your Station Data Feeds Into the Models

This is the part that connects your backyard instruments to the supercomputers. The process is called data assimilation — the mathematical technique for combining a model's prior forecast with new observations to produce an improved estimate of the atmospheric state.

The Data Pipeline

- Your station reads temperature, humidity, pressure, wind, and precipitation.

- Your station software (GraphWeather, WeeWX, etc.) transmits the data to CWOP via APRS-IS.

- CWOP passes the data to NOAA's MADIS (Meteorological Assimilation Data Ingest System).

- MADIS runs automated quality control — spatial consistency checks (is your reading reasonable compared to nearby stations?), temporal consistency (did the temperature change plausibly over the last hour?), and internal consistency (is the dewpoint below the temperature?).

- Data that passes QC is flagged as usable and enters the observation pool for data assimilation.

- The assimilation system (3DVAR or 4DVAR) blends your observation with the model's prior estimate, weighted by the observation's estimated accuracy and the model's estimated uncertainty at your location.

- The improved initial state feeds the next model run.
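The three kinds of quality-control check in the pipeline above can be sketched in a few lines. This is not MADIS code: the function names and both thresholds are hypothetical, and real QC systems use considerably more elaborate tests. It can still be useful as a pre-flight check on your own data before it leaves the station.

```python
import statistics

# Hypothetical thresholds, chosen only for illustration
MAX_HOURLY_CHANGE_C = 10.0
MAX_NEIGHBOUR_DIFF_C = 5.0

def internal_check(temp_c, dewpoint_c):
    """Dewpoint may equal the air temperature at saturation but not exceed it."""
    return dewpoint_c <= temp_c

def temporal_check(temp_c, temp_hour_ago_c):
    """Reject implausibly large temperature jumps over one hour."""
    return abs(temp_c - temp_hour_ago_c) <= MAX_HOURLY_CHANGE_C

def spatial_check(temp_c, neighbour_temps_c):
    """Compare against the median of nearby stations, if there are any."""
    if not neighbour_temps_c:
        return True  # nothing to compare against
    median = statistics.median(neighbour_temps_c)
    return abs(temp_c - median) <= MAX_NEIGHBOUR_DIFF_C

def run_qc(temp_c, dewpoint_c, temp_hour_ago_c, neighbour_temps_c):
    """Run all three checks and flag the observation as usable or not."""
    checks = {
        "internal": internal_check(temp_c, dewpoint_c),
        "temporal": temporal_check(temp_c, temp_hour_ago_c),
        "spatial": spatial_check(temp_c, neighbour_temps_c),
    }
    checks["usable"] = all(checks.values())
    return checks

good = run_qc(18.2, 12.5, 17.6, [17.9, 18.4, 18.8])
bad = run_qc(31.0, 12.5, 17.6, [17.9, 18.4, 18.8])  # sensor spike
print(good)
print(bad)
```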

Your single station contributes a small adjustment — but multiplied by thousands of stations across the CWOP and MADIS network, the cumulative impact on initial conditions is significant. Studies have shown that personal weather station data measurably improves short-range forecast skill, particularly for surface temperature, pressure, and humidity in areas between official observation sites.
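The weighting in the blending step can be illustrated with a single number. The sketch below is the scalar form of the standard variance-weighted update; operational 3DVAR and 4DVAR systems solve the same kind of problem for millions of variables at once using covariance matrices, so treat this as an analogy rather than their implementation. The temperatures and error variances are made up.

```python
def blend(background, background_var, observation, observation_var):
    """Variance-weighted blend of a model prior and one observation.

    gain is the weight given to the observation: close to 1 when the model
    is uncertain, close to 0 when the observation is noisy.
    """
    gain = background_var / (background_var + observation_var)
    analysis = background + gain * (observation - background)
    analysis_var = (1.0 - gain) * background_var
    return analysis, analysis_var, gain

# Hypothetical case: the model's prior says 18.0 °C with a 2 °C standard
# error; a well-sited station reports 20.0 °C with a 1 °C standard error.
analysis, analysis_var, gain = blend(
    background=18.0, background_var=2.0 ** 2,
    observation=20.0, observation_var=1.0 ** 2,
)
print(f"gain={gain:.2f} analysis={analysis:.2f} variance={analysis_var:.2f}")
```

Raising `observation_var` in this example, as would happen for a station with an erratic record, pulls the analysis back toward the model's prior value.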

What Makes Your Data Valuable

How much your station data contributes to NWP depends on three things:

- Location uniqueness. If you are the only station within 20 km, your data fills a gap that no other observation can. If you are surrounded by ten other CWOP stations, the marginal value is lower.

- Data quality. A well-calibrated, properly sited station contributes confidently to assimilation. A station with known biases (e.g., temperature reads 2 °C high due to poor siting) still has value — the bias can be estimated and corrected — but unrecognised biases are worse than no data at all. The station data sanity checks guide helps you identify and correct these.

- Reporting consistency. A station that reports reliably every 10 minutes for years builds a statistical profile that the assimilation system uses to estimate observation error. Intermittent reporters are harder to characterise and receive lower weight.

AI and Machine Learning in Weather Prediction

The traditional approach to weather forecasting — solving the equations of atmospheric physics on a grid — has been the foundation since the 1950s. It works because the physics is well understood. But it is computationally expensive, and some atmospheric processes (turbulence, convection, radiation transfer) happen at scales smaller than the grid spacing and must be parameterised — approximated with simplified equations that introduce errors.

The New Wave

Starting around 2022, machine-learning models trained on decades of reanalysis data (historical atmospheric states reconstructed from observations) demonstrated forecast skill competitive with traditional NWP models:

- GraphCast (Google DeepMind): A graph neural network that produces global 10-day forecasts in under a minute on a single accelerator chip, compared to hours on a supercomputer for the GFS. In the published evaluation, GraphCast outperformed ECMWF's high-resolution deterministic forecast (HRES) on most of the variables and lead times tested.

- Pangu-Weather (Huawei): A transformer-based model that produces forecasts of comparable skill to the ECMWF deterministic run, at a fraction of the computational cost.

- FourCastNet (NVIDIA): A Fourier neural operator approach that is particularly strong for precipitation forecasting.

- NOAA's own efforts: NOAA has been integrating AI/ML techniques into its operational pipeline, using neural networks for post-processing (bias correction, downscaling), emulating expensive physical parameterisations, and developing hybrid models that blend physics-based dynamics with learned corrections.

What This Means for Station Operators

AI weather models are exciting, but they come with caveats that matter to station operators:

They are not replacing physics-based models. AI models are being integrated alongside traditional NWP, not instead of it. The physics-based models provide the dynamical backbone; AI models provide corrections, downscaling, and fast ensemble generation.

They still need observations for initialisation. AI models are typically trained to produce forecasts from a given initial state — they need that starting state to come from somewhere. Data assimilation using observations (including your station data) remains essential.

Ground truth becomes more important, not less. As models improve, the ability to verify their predictions against high-quality surface observations becomes increasingly valuable. Your station is a verification point. When a new AI model claims superior performance, the claim is evaluated against observations from stations like yours.

Local accuracy is not the same as global skill. An AI model might outperform the GFS when averaged across the globe, but underperform in your specific microclimate — a coastal zone, a valley, or an urban heat island. Your station data is the only way to assess local model performance.

Interpreting Ensemble Spread for Your Location

Here is a practical exercise for any station operator who wants to move beyond checking a single forecast number:

Access ensemble forecast data for your location. NOAA's GEFS ensemble products are freely available. Weather apps like Windy and Meteoblue display ensemble spread as temperature or precipitation probability fans.

Compare the ensemble spread to your station observations. On days when the ensemble is tight (low spread), the forecast should be accurate. On days when the ensemble diverges (high spread), expect larger forecast errors. Track this over a few weeks and you will develop intuition for how much to trust a given forecast.
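One way to track this is to log the ensemble spread and the forecast error for each day, then compare the error on tight days with the error on wide days. The sketch below uses four invented days with three members each; in practice you would want several weeks of data, and the function names are hypothetical.

```python
import statistics

def spread_and_error(member_values, observed):
    """Return (ensemble spread, absolute error of the ensemble mean)."""
    mean = statistics.fmean(member_values)
    spread = statistics.pstdev(member_values)
    return spread, abs(mean - observed)

def error_by_spread(days):
    """Split days into tight and wide halves by spread; return mean error of each."""
    results = sorted(spread_and_error(members, obs) for members, obs in days)
    half = len(results) // 2
    tight = [err for _, err in results[:half]]
    wide = [err for _, err in results[half:]]
    return statistics.fmean(tight), statistics.fmean(wide)

# Hypothetical log: (ensemble member highs in °C, station-observed high in °C)
days = [
    ([23.8, 24.0, 24.2], 24.1),
    ([17.9, 18.0, 18.1], 18.2),
    ([20.0, 23.0, 26.0], 25.0),
    ([10.0, 13.0, 16.0], 11.5),
]

tight_error, wide_error = error_by_spread(days)
print(f"mean error on tight days: {tight_error:.2f} °C")
print(f"mean error on wide days:  {wide_error:.2f} °C")
```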

Note which model performs best in your microclimate. If you live in a mountain valley, the NAM or HRRR (with 3 km resolution) will typically outperform the GFS (13 km) for temperature and wind. If you are in a flat, open area, the difference may be minimal.

Use your station data for model verification. Compare your daily high and low temperatures, precipitation totals, and wind maxima against what each model predicted. Over time, you will know which model to trust for which parameter at your location.
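A simple verification routine needs only three statistics: bias (does the model run warm or cold?), mean absolute error, and root-mean-square error. The sketch below assumes you have already paired each model's forecast highs with your station's observed highs; the numbers are invented and do not represent real GFS or HRRR performance.

```python
import math

def verify(forecasts, observations):
    """Bias, mean absolute error, and RMSE of forecasts against observations."""
    errors = [f - o for f, o in zip(forecasts, observations)]
    n = len(errors)
    return {
        "bias": sum(errors) / n,                       # positive = model too warm
        "mae": sum(abs(e) for e in errors) / n,
        "rmse": math.sqrt(sum(e * e for e in errors) / n),
    }

# Hypothetical daily highs (°C) for five days
observed_highs = [21.0, 23.5, 19.0, 25.0, 22.0]
model_highs = {
    "GFS": [22.0, 24.5, 20.5, 26.0, 23.0],
    "HRRR": [21.5, 23.0, 19.5, 24.5, 22.5],
}

scores = {name: verify(highs, observed_highs) for name, highs in model_highs.items()}
for name, s in scores.items():
    print(f"{name}: bias={s['bias']:+.2f} mae={s['mae']:.2f} rmse={s['rmse']:.2f}")
```

Keeping a separate score table per parameter (temperature, precipitation, wind) makes it easier to see that one model may lead on one variable and trail on another.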

The Grafana dashboard guide shows how to visualise your station data in Grafana — you could extend that dashboard with a panel overlaying forecast data from the GFS or ECMWF for side-by-side comparison.

Common Mistakes

Treating a single AI forecast as certain. GraphCast and Pangu-Weather produce single deterministic forecasts (though ensemble extensions exist). Interpret a single AI forecast with the same caution as a single deterministic GFS run: it is one possible outcome, not a guaranteed prediction.

Confusing model resolution with local accuracy. A 3 km model is not automatically 3 km "accurate." It resolves features at 3 km scale, but the accuracy at any single point depends on the quality of initial conditions, the terrain representation, and the physical parameterisations. Your station, 2 metres off the ground in your specific microclimate, experiences conditions that even a 1 km model may not capture.

Ignoring bias. Every model has systematic biases: the GFS tends to be slightly warm in certain regions, the ECMWF slightly wet. Verifying forecasts against your own station data reveals the biases that apply at your location, and correcting for them will often improve your reading of a forecast more than an incremental model upgrade does.

Assuming more ensemble members means better forecasts. The ECMWF's 51 members give better uncertainty estimates than the GEFS's 31, all else being equal. But the quality of the perturbation method and the base model matter more than the member count.

Neglecting the value of long observation records. AI models are trained on 40+ years of reanalysis data. Your station's multi-year record, if consistently maintained, becomes part of the observational fabric that validates and improves these models. A station that has been running for ten years with quality data is immensely more valuable than one that started last month.

Related Reading

- Publishing overview — the broader context of getting your station data out into the world.

- METAR and CWOP basics — the data standards and formats that feed into the assimilation pipeline.

- Station data sanity checks — ensuring your data quality meets the bar for scientific contribution.

- Sharing your data — setting up CWOP and other network contributions that feed the models.

- Publishing beyond WUnderground — expanding your station's reach to maximise scientific impact.

- Build a Grafana dashboard — visualising your station data for model verification.

FAQ

Does my personal weather station data actually make it into the GFS or ECMWF? Your CWOP data enters MADIS, which feeds into the GFS data assimilation system. The ECMWF has its own observation ingestion pipeline and also accesses MADIS data. Whether your specific station's data influences a given model run depends on the data assimilation system's quality control decisions and the observation density in your area. In data-sparse regions, a single station can have measurable impact.

How can I tell if my station's data was used in a model run? MADIS provides a station-level quality report that shows which observations passed QC and were available for assimilation. You cannot directly confirm that a specific model used a specific observation, but if your data passed MADIS QC, it was available to every model that ingests MADIS data.

Are AI weather models available for personal use? Some are. GraphCast's code and weights are open-source. Pangu-Weather's weights have been released. Running them requires significant GPU resources (a high-end consumer GPU can produce a single forecast in minutes), but the barrier is dropping rapidly. For station operators, the practical value is in consuming AI model forecasts (via apps and APIs) rather than running the models yourself.

Will AI models make personal weather stations obsolete? No. The opposite. AI models are trained on and verified against observations. More stations, better data, and longer records make AI models better. The models produce forecasts; stations produce observations. They are complementary, not competitive. If anything, the AI era increases the value of quality surface observations because the models are now skilful enough that verification data must be equally high quality to distinguish between them.

How often do models update their data assimilation to include new station data? The HRRR assimilates new data every hour. The GFS runs data assimilation four times daily (00Z, 06Z, 12Z, 18Z). The ECMWF runs it twice daily (00Z, 12Z). So your station data, if submitted to CWOP every 10 minutes, is available for the next assimilation cycle — within 1–12 hours of observation, depending on the model.
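The waiting time can be worked out from the cycle hours quoted above. The sketch below is a simplification: it treats each nominal cycle time as the moment an observation must arrive by, whereas operational systems use data cutoff windows around each cycle that are not described here. The function name and the example timestamp are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Nominal assimilation cycle hours (UTC), as quoted in the answer above
CYCLE_HOURS = {
    "HRRR": list(range(24)),
    "GFS": [0, 6, 12, 18],
    "ECMWF": [0, 12],
}

def next_cycle(obs_time, cycle_hours):
    """Return the first nominal cycle time at or after obs_time."""
    start = obs_time.replace(minute=0, second=0, microsecond=0)
    for offset in range(25):
        candidate = start + timedelta(hours=offset)
        if candidate >= obs_time and candidate.hour in cycle_hours:
            return candidate
    raise ValueError("cycle_hours must contain at least one hour from 0 to 23")

obs_time = datetime(2024, 1, 15, 13, 20, tzinfo=timezone.utc)
for model, hours in CYCLE_HOURS.items():
    cycle = next_cycle(obs_time, hours)
    print(f"{model}: next cycle {cycle:%Y-%m-%d %H}Z, wait {cycle - obs_time}")
```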